Most security teams assume they will know when an identity has been compromised. We have the tools. An alert will fire. A control will fail. Something will clearly show that an attacker has gained access. In fact, 80% of security leaders believe their tools provide adequate protection across hybrid and cloud environments.

In practice, it rarely works that way.

Recently, a customer found valid corporate credentials for sale on the dark web for $6. This was not part of a targeted attack. It was just one more entry in a long list of stolen accounts. Now that attackers use AI agents to automate credential harvesting and validation, access is generated, tested, and resold continuously and at scale.

Attackers understand this dynamic. And increasingly, they exploit it.

In most environments, identity compromise is not detected right away. The biggest challenge today is not stopping identity-based attacks but realizing that they have already happened, and detecting them can be harder than we think.

- Reality: Every hybrid attack eventually becomes an identity attack. Despite the millions invested in security, 90% of organizations have experienced one.

- Fact: 31% of users are service accounts with broad access privileges and little visibility, and a single Active Directory misconfiguration can create, on average, 109 hidden administrators.

- Fact: 90% of the companies that suffer identity attacks had multi-factor authentication (MFA) in place.

The problem is only getting worse.

We are no longer protecting only human identities. Non-human identities (service accounts, APIs, workloads, and increasingly AI agents) are proliferating rapidly and often outnumber humans. They run continuously, authenticate programmatically, and interact across systems at machine speed. At the same time, attackers are using AI to scale their attacks and blend into normal identity behavior faster than ever.

The result: more identities, less visibility, and attacks that spread faster than traditional detection can keep up.

Silence can be a sign of identity compromise

Limited visibility into identity activity increases the risk of missing a breach.

Modern enterprises span cloud, SaaS, networks, and remote access. Identities move fluidly across all of these environments. Most security tools do not. Visibility remains fragmented, which creates gaps where attackers operate undetected.

Just because everything looks fine does not mean we are safe. It may simply mean we cannot see what is happening. This gap widens in AI-driven environments. As AI agents and automation workflows continuously access systems, identity activity grows exponentially, making it harder to tell normal behavior from malicious behavior. At machine speed, blind spots multiply.

Identity compromise shows up after login

Security has long focused on access: passwords, multi-factor authentication (MFA), and authentication flows. But attackers have adapted. Once inside, they behave like legitimate users.

The real signal is not the login but what happens afterward.

In many cases, the first visible sign has nothing to do with authentication. It is a user querying unfamiliar systems, accessing administrative APIs, or requesting Kerberos tickets across multiple hosts. Each action is valid on its own. Together, they reveal lateral movement.
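As a rough illustration of why these actions only stand out in aggregate, the sketch below counts how many distinct hosts one identity touches within a short window. The event shape `(timestamp, identity, target_host)`, the function names, the 10-minute window, and the threshold of 5 hosts are all assumptions made for this example, not values from any product or log format.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def hosts_per_identity(events, window=timedelta(minutes=10)):
    """For each identity, return the largest number of distinct hosts
    touched within any sliding time window.

    `events` is an iterable of (timestamp, identity, target_host) tuples.
    This event shape is hypothetical, chosen only for illustration.
    """
    by_identity = defaultdict(list)
    for ts, identity, host in events:
        by_identity[identity].append((ts, host))

    peaks = {}
    for identity, items in by_identity.items():
        items.sort()
        peak = 0
        start = 0
        for end in range(len(items)):
            # Advance the left edge until the window spans at most `window`.
            while items[end][0] - items[start][0] > window:
                start += 1
            distinct = len({host for _, host in items[start:end + 1]})
            peak = max(peak, distinct)
        peaks[identity] = peak
    return peaks


def flag_fanout(events, threshold=5, window=timedelta(minutes=10)):
    """Return identities whose host fan-out reaches the (assumed) threshold."""
    peaks = hosts_per_identity(events, window)
    return sorted(i for i, n in peaks.items() if n >= threshold)
```

Every individual event here would pass an authentication check. Only the count across hosts in a short period separates the identity from its peers, and in practice the threshold would need tuning per environment.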

Unusual access patterns, unexpected system interactions, and sudden data access are all important indicators, yet they often fall outside the reach of traditional controls.

Malicious activity looks legitimate

Attackers do not break into systems. They log in.

In recent SaaS-related security incidents, attackers did not steal passwords. They stole authentication tokens from a third-party integration and reused them. No login prompt. No MFA. Just valid sessions. From the system's point of view, everything looked legitimate.

By using valid credentials, attackers blend into normal operations: they leverage existing permissions, move along trusted paths, and avoid triggering alerts. This is especially true for non-human identities, which often hold elevated privileges but receive little behavioral monitoring. They do not use MFA, they operate continuously, and they are harder to validate, which makes them easier to abuse. As AI adoption grows, so does this attack surface.

In a common pattern, attackers obtain an API key or a long-lived service account tied to a data pipeline. The identity behaves as expected: it pulls data, accesses storage, and calls APIs, but with subtle differences such as slightly different datasets, timing, or destinations. There are no login anomalies to detect, only changes in behavior.
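A minimal sketch of what "changes in behavior" could mean for a service account: compare recent activity against a recorded baseline of datasets, destinations, and hours of day. The `Access` record, the `behavior_drift` function, and the finding labels are hypothetical names introduced for this example. A real baseline would be statistical rather than a simple set lookup.

```python
from typing import NamedTuple


class Access(NamedTuple):
    """One data access by a service account (hypothetical record shape)."""
    dataset: str
    destination: str
    hour: int  # hour of day, 0-23


def behavior_drift(baseline, observed):
    """Return findings for observed accesses that use a dataset,
    destination, or hour never seen in the baseline."""
    known_datasets = {a.dataset for a in baseline}
    known_destinations = {a.destination for a in baseline}
    known_hours = {a.hour for a in baseline}

    findings = []
    for a in observed:
        if a.dataset not in known_datasets:
            findings.append(("new_dataset", a.dataset))
        if a.destination not in known_destinations:
            findings.append(("new_destination", a.destination))
        if a.hour not in known_hours:
            findings.append(("unusual_hour", a.hour))
    # Drop duplicates while keeping first-seen order.
    return list(dict.fromkeys(findings))
```

Note that nothing in this check looks at authentication. The key or account is valid throughout, so the only available signal is the difference from what the identity normally does.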

"Normal" becomes the perfect disguise.

Prevention is not detection

We rely heavily on controls such as multi-factor authentication (MFA) and endpoint detection and response (EDR). They are essential, but they were not designed to detect identity compromise, especially in AI-driven attacks.

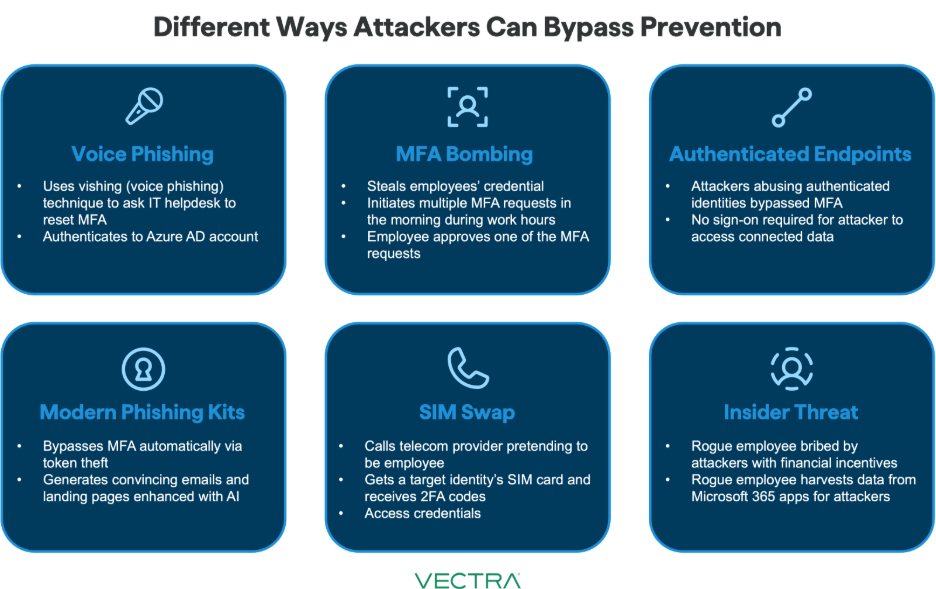

Attackers can bypass MFA through phishing, social engineering, or compromised devices, and then operate beyond the reach of endpoint visibility using legitimate access.

Adversary-in-the-middle phishing kits now handle authentication in real time. The user completes MFA, the attacker captures the session token and reuses it immediately. From that moment on, the attacker acts as a fully authenticated user. No failed login attempts. No brute force. Just a valid session.
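One post-login heuristic for this pattern is to look for a single session token that appears with more than one client fingerprint. The sketch below assumes request records of the form `(session_id, client_ip, user_agent)`, which is a hypothetical shape, and the function name is invented for illustration. Legitimate users also change networks, so treat a hit as a signal to correlate rather than as proof of compromise.

```python
def reused_sessions(requests):
    """Return session ids observed with more than one (ip, user_agent) pair.

    `requests` is an iterable of (session_id, client_ip, user_agent)
    tuples. This record shape is an assumption for the example.
    """
    fingerprints = {}
    for session_id, client_ip, user_agent in requests:
        fingerprints.setdefault(session_id, set()).add((client_ip, user_agent))
    return sorted(s for s, seen in fingerprints.items() if len(seen) > 1)
```

The check never inspects the login itself, which succeeded with MFA. It only asks whether the session that resulted is being used from somewhere the original client was not.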

The idea that controls will surface every compromise is wrong. In reality, they fail silently. As attackers use AI to automate identity abuse, the gap between prevention and detection keeps growing.

The signals are there... but disconnected

The clues that point to identity compromise do exist. But they are scattered.

An authentication anomaly in one tool. Suspicious network activity in another. Cloud patterns in a third. Without correlation, these signals stay isolated and inconclusive, producing noise instead of clarity.

This fragmentation gets worse in AI-driven environments, where identity spans more systems, moves faster, and generates more data than analysts can realistically correlate.

For example, a user logs in from a new location. A few minutes later, that identity generates unusual SMB traffic. Shortly after, it accesses unfamiliar cloud resources. Each event looks low-risk on its own, especially when viewed in separate tools. Only when identity, network, and cloud activity are connected does the attack become evident.
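The example above can be sketched as a simple correlation: group low-severity events by identity and raise an incident only when several independent sources report on the same identity within a short window. The normalized event tuple `(timestamp, identity, source, description)`, the `correlate` function, the 30-minute window, and the three-source minimum are assumptions chosen for this illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def correlate(events, window=timedelta(minutes=30), min_sources=3):
    """Return {identity: [events]} for identities with events from at
    least `min_sources` distinct sources inside one time window.

    `events` is an iterable of (timestamp, identity, source, description)
    tuples. This normalized shape is hypothetical.
    """
    by_identity = defaultdict(list)
    for event in events:
        by_identity[event[1]].append(event)

    incidents = {}
    for identity, items in by_identity.items():
        items.sort(key=lambda e: e[0])
        for i, first in enumerate(items):
            # All events for this identity within `window` of `first`.
            cluster = [e for e in items[i:] if e[0] - first[0] <= window]
            if len({e[2] for e in cluster}) >= min_sources:
                incidents[identity] = cluster
                break
    return incidents
```

The design choice is to key everything on the identity rather than on the tool that produced the alert. Three weak signals from three consoles become one incident once they share that key and a time window.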

Rethinking how we detect identity compromise

The question is not whether identity compromises are happening, but whether we can detect them.

In the AI-driven enterprise, identity compromise is more frequent, harder to detect, and faster to unfold. More identities. More automation. More speed. More opportunities for attackers to go unnoticed.

To close this gap, we need to change how we approach identity detection:

- Assume compromise is inevitable and focus on finding attackers who are already inside

- Treat identities as entities that act, not just as credentials

- Look for anomalous patterns and movement, not just authentication anomalies

- Bring identity, network, and cloud activity together in a unified view

- Prioritize high-fidelity signals that indicate attacker intent

Because the most dangerous attacker is not the one trying to get in, but the one who is already inside and looks like they belong.

Learn more about the Vectra AI approach to identity-based attacks: https://youtu.be/ytWOynLTAco
