This morning, TeamPCP published the full source code of the Shai-Hulud supply-chain worm on GitHub. MIT license. Two repositories. Forty-four forks within hours, one of which added FreeBSD support before most security teams had read the headline.

Is it vibe coded? Yes. Does it work? Let results speak. Change keys and C2 as needed. Love - TeamPCP

I wrote about Shai-Hulud last year. That post covered the foundational mechanics: how the worm hides inside package installation, switches runtimes to avoid detection, collects credentials, exfiltrates through public GitHub repos, and propagates by riding the victim’s existing trust in the ecosystem.

Those mechanics still hold. But the May 2026 campaign against TanStack and more than 170 other packages introduced something the original post did not cover. The worm learned how to make its malicious packages look certified.

What changed in the TanStack campaign

In every previous Shai-Hulud attack, the worm stole npm tokens and published poisoned packages under the victim’s account. The packages looked legitimate because they came from a known publisher. That was the deception.

The TanStack campaign added a step. According to research published by Wiz, StepSecurity, and Snyk, the worm extracted an OIDC token from the GitHub Actions runner process’s memory. Then it used that token to obtain a cryptographic signing certificate from Sigstore’s Fulcio certificate authority. It used that certificate to sign the malicious package.

The result: the poisoned package carried a valid SLSA Build Level 3 provenance attestation. Not a forged one. A legitimate one, issued by Sigstore to the victim’s GitHub Actions identity.

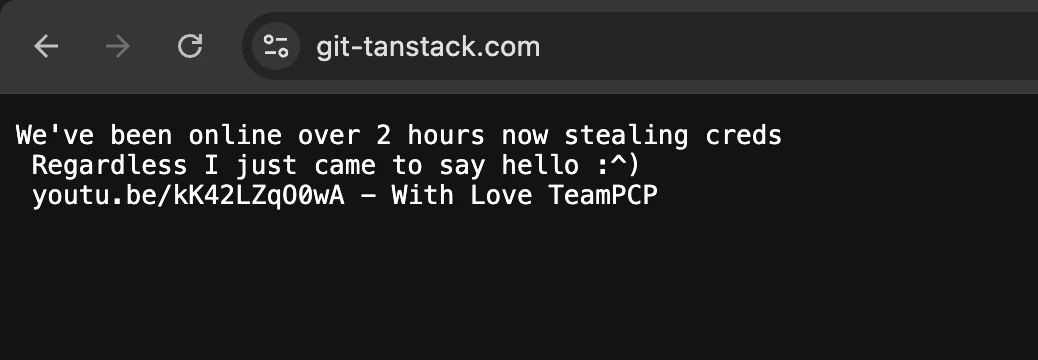

Stolen credentials were exfiltrated to git-tanstack[.]com, a lookalike domain registered specifically for the operation, alongside Session Network endpoints and a set of Dune-themed GitHub repositories. While the attack was running, TeamPCP left the following message on that domain:

(The YouTube link goes to Martin Solveig's 'Hello' music video. Not a payload.)

What OIDC tokens are and why they live in runner memory

OIDC stands for OpenID Connect. In the GitHub Actions context, it solves a specific credential-management problem: CI/CD pipelines need to authenticate to external services (npm registries, cloud providers, Sigstore) without storing long-lived secrets in the repository.

The solution is a short-lived token. When a job runs, GitHub’s OIDC provider issues a token that represents the specific job’s identity: this workflow, this repository, this commit, this actor. The job does not receive the token up front. The runner exposes a request URL and a bearer credential as environment variables, and the job uses them to fetch the token on demand. The token expires within minutes of issuance.
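
To make "represents the specific job's identity" concrete, here is a minimal Python sketch that reads the claims out of such a token without verifying its signature. The claim names it expects (repository, workflow, sha, actor, exp) follow GitHub's documented OIDC token format. The function names are mine and purely illustrative.

```python
import base64
import json
import time
from typing import Optional


def decode_claims(jwt: str) -> dict:
    """Return the payload claims of a JWT WITHOUT verifying its signature."""
    payload_b64 = jwt.split(".")[1]
    # JWT segments are unpadded base64url; restore the padding before decoding.
    payload_b64 += "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(payload_b64))


def seconds_remaining(claims: dict, now: Optional[float] = None) -> float:
    """Seconds until the token's `exp` claim passes (negative once expired)."""
    current = time.time() if now is None else now
    return claims["exp"] - current


def job_identity(claims: dict) -> dict:
    """Pick out the claims that tie the token to one workflow run."""
    wanted = ("repository", "workflow", "sha", "actor")
    return {name: claims.get(name) for name in wanted}
```

Everything a downstream service learns about the job comes from those claims. Nothing in them describes which code inside the job asked for the token.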

The security assumption is that the token is transient and scoped. An attacker who compromises a developer’s stored secrets cannot reuse a token from a previous job, because it has already expired. That assumption is sound under normal conditions.

The worm does not operate under normal conditions. It runs code inside the Actions job itself, at the moment the token is live.

How the extraction works in practice

The worm reads the ACTIONS_ID_TOKEN_REQUEST_TOKEN environment variable from the runner process and uses it to request an OIDC token from GitHub’s OIDC endpoint. It then presents that token to Sigstore’s Fulcio CA, which issues a short-lived X.509 certificate bound to the GitHub Actions job identity. With that certificate and an entry in the Rekor transparency log, the worm produces a valid cosign signature and a SLSA provenance attestation for the package it is about to publish.

Each step is a legitimate API call to a legitimate service. The runner has permission to make all of them. That is the point. The malicious code is running inside a job that earned those permissions through the repository’s trust configuration, and it uses them exactly as designed.
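
The first of those calls shows how little there is to distinguish. The sketch below follows GitHub's documented flow for any job granted the id-token: write permission: read two environment variables, add an audience, send a bearer-authenticated GET. The function name build_oidc_request is hypothetical, the "sigstore" audience is the value Sigstore clients conventionally request, and the sketch only constructs the request rather than sending it.

```python
import os
import urllib.request
from typing import Mapping
from urllib.parse import urlencode


def build_oidc_request(
    env: Mapping[str, str] = os.environ,
    audience: str = "sigstore",
) -> urllib.request.Request:
    """Build (but do not send) the token request a workflow step would make."""
    url = env["ACTIONS_ID_TOKEN_REQUEST_URL"]
    bearer = env["ACTIONS_ID_TOKEN_REQUEST_TOKEN"]
    # The request URL may already carry query parameters; append accordingly.
    separator = "&" if "?" in url else "?"
    full_url = f"{url}{separator}{urlencode({'audience': audience})}"
    return urllib.request.Request(
        full_url,
        headers={"Authorization": f"Bearer {bearer}"},
    )
```

A legitimate signing step and an injected dependency running in the same job would build the same request from the same environment. The OIDC provider has no field that tells them apart.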

Why the provenance check fails

SLSA provenance verification is designed to answer a specific question: was this artifact built by the expected build system, from the expected source, without unauthorized influence? Build Level 3 specifically requires a hardened build environment that prevents the job from tampering with its own provenance.

The Shai-Hulud OIDC technique bypasses the design assumption, not the technical control:

- The build system is not compromised.

- The OIDC endpoint is not compromised.

- Sigstore’s Fulcio CA is not compromised.

What the attestation cannot capture is that the code running inside the job was not the code the maintainer intended to run. The worm arrived via a dependency installed earlier in the pipeline.

From Sigstore’s perspective, a legitimate GitHub Actions runner, operating under a legitimate repository identity, signed a legitimate artifact. The attestation is accurate about those facts. It is silent about how the payload got there.

The certificate verifies the signer’s identity. It does not verify the signer’s intentions, or what else was running in the same process.
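
A simplified model of a consumer-side provenance check makes the gap visible. This is a sketch, not the logic of any real verifier: the Attestation and Policy types and their field names are hypothetical, and real tooling checks considerably more. The point is only which questions get asked.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    """Hypothetical, flattened view of what a provenance bundle asserts."""
    issuer: str
    repository: str
    workflow_path: str
    signature_valid: bool
    in_transparency_log: bool


@dataclass(frozen=True)
class Policy:
    """What the consumer expects the build identity to be."""
    issuer: str
    repository: str
    workflow_path: str


def provenance_passes(att: Attestation, policy: Policy) -> bool:
    """True when the artifact was signed by the expected build identity."""
    return (
        att.signature_valid
        and att.in_transparency_log
        and att.issuer == policy.issuer
        and att.repository == policy.repository
        and att.workflow_path == policy.workflow_path
    )
```

Build two Attestation values, one for a clean release and one for a release where a compromised dependency ran earlier in the same job, and they come out equal field for field. provenance_passes returns True for both, because no field records what executed inside the job.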

Two detection problems, not one

The original Shai-Hulud post framed this as a 'nothing looks wrong' problem: the worm's individual actions (installing packages, running scripts, publishing to npm) are all routine operations that produce no malware signature and trigger no policy violation. Each step looks like normal development work.

The OIDC technique surfaces a second, related problem: the movement itself is not visible. The token extraction and the Sigstore signing call are lateral moves through the identity layer of the CI/CD trust fabric. The worm obtains a federated credential from GitHub, presents it to Sigstore, and receives a certificate that authorizes publication to npm under the victim's provenance chain. The movement crosses three distinct systems: GitHub's OIDC provider, Sigstore's CA, and the npm registry. Each system logs a clean authentication event.

That is three audit logs and three correct authentications. The log entry that does not exist is the one that would tell you the code making those calls was not supposed to be there.

In the framework I use in Mind Your Attack Gaps, these map to two of the three core detection gaps: 'nothing looks wrong' (Gap 1) and 'movement isn't visible' (Gap 3). Most supply-chain attacks trigger one. This technique triggers both simultaneously, which is why it survived automated scanning across 170 packages before any vendor flagged it.

What the open-sourcing changes

Until today, the OIDC extraction technique belonged to one group. Every incident involving it traced back to TeamPCP, so defenders could lean on forensic analysis from Wiz, Ox Security, and ReversingLabs and monitor for that group's specific indicators: the C2 domains, the payload filenames, the exfiltration patterns to Session Network and Dune-themed repositories.

The open-sourcing breaks that containment model. Any actor who forks the repository gets the OIDC extraction capability. The specific indicators will change with every variant. The technique stays the same.

The FreeBSD fork, added within hours of the repositories going live, illustrates the pace. The worm now runs on platforms where the original was never tested. PyPI is a confirmed target ecosystem from the May 2026 campaign. RubyGems and Maven are the obvious next candidates. The 401 malicious package versions published across 170 packages in a five-hour window on May 11 set a baseline. Derivative actors running this toolkit will not be slower.

What detection looks like

Artifact scanning cannot catch this. The attestation is valid. The signature is valid. The package is functionally correct. Downstream consumers who verify SLSA provenance before installation will see a passing check.

The signals are behavioral, and they appear before the poisoned package is published. A CI runner requesting an OIDC token and calling Sigstore's Fulcio endpoint is not unusual by itself. A runner doing that and also making outbound connections to an unfamiliar host in the same job execution is a sequence worth examining. A package publish that follows anomalous network activity in the same runner session is the chain to catch.

The original post listed these behavioral signals: a GitHub token creating repositories at unexpected volume, a CI runner reading cloud secrets beyond the scope of its declared job, a cloud key calling services it has never used. The new addition is an OIDC request, a Sigstore API call, and an npm publish in the same job that also produced unfamiliar outbound connections.

Each action is legitimate. The combination is not.
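
As a rough sketch of that combination rule, assume CI telemetry has already been normalized into per-job events. The JobEvent type, the event kind strings, and the known_hosts allowlist below are all hypothetical. Real telemetry will look different, and a production rule would need ordering and time windows as well.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple


@dataclass(frozen=True)
class JobEvent:
    job_id: str
    kind: str       # "oidc_request", "fulcio_call", "npm_publish", or "outbound"
    host: str = ""  # destination host, only meaningful for "outbound" events


# The three steps that are individually routine in a signing-and-publish job.
SIGNING_CHAIN = {"oidc_request", "fulcio_call", "npm_publish"}


def suspicious_jobs(
    events: Iterable[JobEvent], known_hosts: Set[str]
) -> List[Tuple[str, List[str]]]:
    """Flag jobs that ran the full signing chain AND contacted unfamiliar hosts."""
    by_job: dict = {}
    for event in events:
        by_job.setdefault(event.job_id, []).append(event)

    flagged = []
    for job_id, job_events in by_job.items():
        kinds = {e.kind for e in job_events}
        unfamiliar = sorted(
            {e.host for e in job_events
             if e.kind == "outbound" and e.host not in known_hosts}
        )
        # Neither condition alone is interesting; only the conjunction is.
        if SIGNING_CHAIN <= kinds and unfamiliar:
            flagged.append((job_id, unfamiliar))
    return flagged
```

A job that signs and publishes while talking only to known hosts is not flagged, and neither is a job that contacts a new host but never touches the signing chain. Only a job that does both is returned, together with the hosts that triggered it.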

Why context across environments matters here

Vectra AI watches behavioral patterns across identity systems, networks, and cloud environments. In supply-chain incidents, the relevant signals are rarely in any single log. They are in the correlation: what did this runner do before it published? What external hosts appeared in this job's network traffic that were not there in the last fifty runs? What did this cloud key call that it has not called before?
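
The "not there in the last fifty runs" question reduces to a rolling baseline and a set difference. The sketch below is a generic illustration of that idea, not a description of how any product implements it. The HostBaseline class and its method names are hypothetical.

```python
from collections import deque
from typing import Iterable, Set


class HostBaseline:
    """Remember the outbound hosts seen in the most recent N runs of a job."""

    def __init__(self, window: int = 50) -> None:
        # deque with maxlen silently drops the oldest run once the window is full.
        self._runs: deque = deque(maxlen=window)

    def new_hosts(self, run_hosts: Iterable[str]) -> Set[str]:
        """Hosts in this run that appeared in none of the remembered runs."""
        seen = set().union(*self._runs)
        return set(run_hosts) - seen

    def record(self, run_hosts: Iterable[str]) -> None:
        """Add a completed run's hosts to the baseline."""
        self._runs.append(frozenset(run_hosts))
```

Calling new_hosts before record for each run keeps a run from vouching for its own traffic. The window size is a tuning choice: a short one forgets hosts that legitimately appear only now and then, while a long one lets a host seen once months ago pass as familiar.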

The detection problem Shai-Hulud poses is not a signature problem. It is a context problem. The context lives across three audit logs and a network trace. When those are correlated, the worm's behavioral fingerprint is visible before the malicious package reaches any downstream consumer.

--

The broader pattern, federated identity credentials moving laterally through the CI/CD trust fabric to authenticate at downstream services, is what I cover as the 'movement isn't visible' gap (Gap 3) in the Mind Your Attack Gaps ebook. Chapter 3 walks through the cross-environment movement pattern in detail, with the OAuth supply-chain wave as the primary case study. The Shai-Hulud OIDC technique is the same structural problem inside the build pipeline rather than the SaaS layer.

--

Primary sources:

- Shai-Hulud Open Source Repository on GitHub

- Wiz Research: "Mini Shai-Hulud Strikes Again: TanStack + more npm Packages Compromised" (May 12, 2026)

- StepSecurity: "TeamPCP's Mini Shai-Hulud is Back: A Self-Spreading Supply Chain Attack Hits the npm Ecosystem" (May 11, 2026)

- Snyk: "TanStack npm Packages Compromised" (May 11, 2026)

- TanStack: "Postmortem: TanStack npm supply-chain compromise" (May 11, 2026)